In this post I thought I would update an article I wrote last year that provides an intro to photogrammetric workflows and some thoughts on the latest technology. Originally published last May in GIS Development, this version has updated content and I've also added in links to further information on the various topics discussed throughout the article.

Hope you enjoy!

Introduction

The photogrammetric workflow has been relatively static since the advent of digital photogrammetry. Numerous application tools are dedicated to various parts of the workflow, but the actual photogrammetric tasks have seen little change in recent years. However, we are beginning to see changes in the workflows. The growing proliferation of “new” technologies such as LIDAR, pushbroom, and satellite sensors has caused many commercial vendors to re-examine the application tools they offer. In addition, advances in information technology have opened up the possibility of processing increasingly large quantities of data. This, coupled with improved processing capabilities and network bandwidth, is also causing a change in traditional photogrammetric workflows.

Background

ERDAS has a long history of providing both analytical and digital photogrammetry solutions. As a Hexagon company, ERDAS’ mapping legacy dates back to the 1920s with the founding of Kern Aarau and Wild Heerbrugg. These companies were consolidated into Leica and over the years offered analogue, analytical, and digital photogrammetry and mapping solutions. LH Systems, ERDAS, and Azimuth Corp. were acquired by Leica Geosystems in 2001. These acquisitions allowed Leica to enter a number of spaces in the digital photogrammetry market and offer comprehensive photogrammetric solutions to the production photogrammetry, defense, and GIS markets.

ERDAS’ initial photogrammetric offerings, OrthoBase and Stereo Analyst for IMAGINE, were targeted at the GIS user community. As demand for 3D data grew in the GIS community, Leica Geosystems sought to provide easy-to-use tools for producing “oriented” images from airborne or satellite data and extracting 3D information such as building and road data. With the acquisition of LH Systems in 2001, Leica Geosystems inherited a staff and customer base skilled in production photogrammetry. This new customer base required engineering-level accuracy and primarily worked with large-scale airborne photography in the commercial arena and satellite imagery in the defense market. In early 2004 Leica Geosystems released the Leica Photogrammetry Suite (now LPS). This new product suite initially used updated components from OrthoBase and OrthoBase Pro, and added newly developed technology for stereo viewing and terrain editing. Shortly thereafter mature products such as PRO600 and ORIMA were integrated into the product suite, and numerous update releases increased productivity. In April 2008, Leica Geosystems’ Geospatial Imaging division was re-branded as ERDAS.

Current Workflows

When asked about the “photogrammetric workflow” most industry professionals will refer to the analog frame camera (e.g. RC30) workflow. Analog frame cameras were prevalent during the transition to digital photogrammetry and still remain a common source of imagery. Numerous software tools have been developed to guide users through the traditional analog frame workflow. Popular vendors include BAE, INPHO (now owned by Trimble), Intergraph, and ERDAS. A brief outline of the mainstream analog frame workflow is provided below.

· Scanning process: Airborne camera film is scanned and converted into a digital file format. Some high performance scanners perform interior orientation (IO) as well.

· Image Dodging: Scanning may introduce radiometric problems such as hotspots (bright areas) and vignetting (dark corners). These can be reduced by applying a dodging algorithm. Dodging, in the digital photogrammetry sense of the word, generally calculates a set of input statistics describing the radiometry of a group of images. Then, based on user preferences, it generates target output values for every input pixel. Output image pixels are then shifted from their current DN (digital number) value to their target DN, subject to several user parameters and constraints. Typically there are options for calculating global statistics for a group of images, which has the net effect of balancing out large radiometric differences between images. Overall this resolves the aforementioned problems and “evens out” the radiometry both within individual images and across groups of imagery.

· Project setup: most photogrammetric packages have an initial step where the operator performs steps such as defining a coordinate system for the project, adding images to the project, and providing the photogrammetric system with general information regarding the project. Ancillary information may include data such as flying height, sensor type, the rotation system, and photo direction.

· Camera Information: the operator needs to provide information about the type of camera used in the project. Typically the camera information is stored in an external “camera file” and may be used many times after it is initially defined. It contains information such as focal length, principal point offset, fiducial mark information, and radial lens distortion. Camera file information is typically gathered from the camera calibration report associated with a specific camera.

· Interior Orientation (IO): The interior orientation process relates film coordinates to the image pixel coordinate system of the scanned image. IO can often be performed as an automatic process if it was not performed during the scanning process.

· Aerial Triangulation (AT): The AT process serves to orient images in the project to both one another and a ground coordinate system. The goal is to solve the orientation parameters (X, Y, Z, omega, phi, kappa) for each image. True ground coordinates for each measured point will also be established. The AT process can be the most time-consuming and critical component of the digital photogrammetry workflow. Sub-components of the AT process include:

o Measuring ground control points (typically surveyed points).

o Establishing an initial approximation of the orientation parameters (rough orientation).

o Measuring tie points. This is often an automatic procedure in digital photogrammetry systems.

o Performing the bundle adjustment.

o Refining the solution: this involves removing or re-measuring inaccurate points until the solution is within an acceptable error tolerance. Most commercial software packages contain an error reporting mechanism to assist in refining the solution.

- Terrain Generation: Digital orthophotos are one of the primary end-products in the photogrammetric workflow. Accurate terrain models are an essential ingredient in the generation of digital orthophotos. They are also useful products in their own right, with uses in many vertical market applications (e.g. hydrology modeling, visual simulation applications, line-of-sight studies, etcetera). Terrain models can take the form of TINs (Triangulated Irregular Networks) or Grids. Once AT is complete, terrain generation can typically be run as an automatic process in most photogrammetric packages. Automatic terrain generation algorithms typically match “terrain points” on two or more images (more images increase the reliability of the point). Seed data such as manually extracted vector files, control points, or other data can often be input to help guide the correlation process. There are usually filtering options to remove blunders, also referred to as “spikes” or “wells”, from the output terrain model. Filtering can also be used to assist in the removal of surface features such as buildings and trees. This can be of great assistance if the desired output is a “bare-earth” terrain model. It is important to note that terrain may also be acquired via manual compilation (in stereo), LIDAR, IFSAR (Interferometric Synthetic Aperture Radar), or publicly available datasets such as SRTM.

- Terrain Editing: Digital terrain models (DTMs) that have been generated by autocorrelation procedures typically require some “cleanup” activities to model the terrain to the required level of accuracy. Most photogrammetric packages include some capability for editing terrain in stereo. It is important for operators to see the terrain graphics rendered over imagery in stereo so that they can determine whether automatically generated terrain posts are indeed “on the ground”. That is, that the DTM is an accurate representation of the terrain, or is at least accurate enough for the specific project at hand. Terrain can usually be rendered using a mesh, contours, points, and breaklines. The operator usually has control over which rendering method is used (it could be a combination) as well as various graphic details such as contour spacing, color, line thickness and more. Terrain editing applications usually provide a number of tools for editing TIN and Grid terrain models. In addition to individual post editing (e.g. add, delete, move for TIN posts, adjust Z for Grid cells), area editing tools can be used for a number of operations. These may include smoothing, surface fitting operations, spike and well removal tools, and so on. Geomorphic tools can be used for editing linear features such as a row of trees or hedges. After a terrain edit has been performed, the system will update the display in the viewer so that the operator can assess the accuracy and validity of the edit. Once the editing process is complete, the user may have to convert the terrain model into a customer-specified output format (e.g. one TIN format to another, or TIN to Grid). DTMs are increasingly a customer deliverable in their own right; as mentioned previously, they have many uses and are becoming widespread in various applications.

- Feature Extraction: Planimetric feature extraction is usually an optional step in the workflow, depending on the project specifications. Automatic 3D feature extraction algorithms are under development, but manual stereo extraction is still the predominant method. Feature extraction tools in digital photogrammetry packages typically allow users to collect, edit and attribute point, line, and polygonal features. Features can be products in themselves, feeding into a 3D GIS or CAD environment. Alternatively, building features may be used again in the photogrammetric processing chain in the production of “true orthos”, which take surface features into account to produce imagery with minimized building lean. This can be particularly beneficial in urban environments.

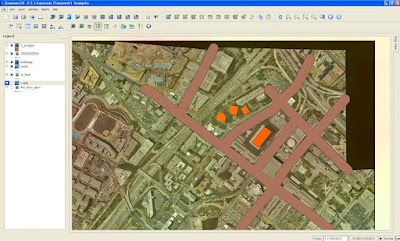

- Orthophoto Generation and Mosaicing: Digital orthophotos are usually the primary final product derived from the photogrammetric workflow. There are many different customer specifications for orthos, including accuracy, radiometric quality, GSD (ground sample distance), output tile definitions, output projection, output file format and more. A mosaicing process is usually included in the ortho workflow to produce a smooth, seamless, and radiometrically appealing product for the entire project area. Mosaicing may be performed as part of the orthophoto process directly (ortho-mosaicing) or performed as a post-process later on. Generally, orthophoto production follows these steps:

o Input image selection: the operator chooses the images to be orthorectified.

o Terrain source selection: the operator chooses the DTM to be used for orthorectification. This is a critical step, as the accuracy of the orthophoto will be determined by the accuracy of the terrain. A terrain model with gross errors (e.g. a hill not modeled correctly) will result in geometric errors in the resulting orthophoto.

o Define orthophoto options: operators typically select a number of options for the orthorectification process. These may include output GSD, the image resampling method, projection, output coordinates and more.
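To give a feel for why the terrain source matters so much, here is a minimal sketch of the standard relief displacement relationship for a vertical frame photo: a height error in the DTM shifts a point horizontally by roughly the height error times the tangent of the off-nadir angle, which equals the point's radial distance in the image divided by the focal length. The function name and the sample values are illustrative assumptions, not taken from any particular package, and a truly vertical photo is assumed.

```python
def ortho_shift_from_terrain_error(height_error_m, radial_dist_mm, focal_length_mm):
    """Approximate horizontal shift (metres) in an orthophoto caused by a DTM
    height error, assuming a vertical frame photo.

    radial_dist_mm is the distance of the point from the principal point,
    measured in the image plane; tan(off-nadir angle) = radial_dist / focal_length.
    """
    if focal_length_mm <= 0:
        raise ValueError("focal length must be positive")
    return height_error_m * radial_dist_mm / focal_length_mm


# Illustrative values only: a 5 m terrain blunder, a point 100 mm from the
# principal point, and a 153 mm lens.
shift = ortho_shift_from_terrain_error(5.0, 100.0, 153.0)
print(f"{shift:.2f} m")
```

The shift is zero at the principal point and grows toward the image edges, which is one reason gross terrain errors tend to show up most clearly near the edges of a frame.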

Aside from defining the various parameters, the orthorectification process is not usually an interactive process. However, the mosaicing process usually does involve some degree of operator interaction. After images are chosen for the mosaic process, there is usually some method of defining seams (polygons or lines used to determine which areas of the input images will be used in the output mosaic). While there are many automatic seam generation applications, there is almost always some element of user interaction to either define or edit seams – or at least review the seams. Operators will typically edit the seams so that they run along radiometrically contiguous areas. That is, they do not cut through well-defined features such as buildings. This is because the ultimate goal of seam editing is to “hide” the seams so that they are not visible in the output mosaic. Once seams are defined, they can usually have smoothing or feathering operations applied to them so that their appearance is minimized.
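As a rough illustration of feathering, the sketch below blends one row of pixels from two overlapping, co-registered images across a vertical seam using a simple linear ramp. This is only one possible approach; the function name, the flat-list representation of a row, and the linear weighting are assumptions made for the example, and commercial packages may blend quite differently.

```python
def feather_row(left_row, right_row, seam_col, feather_width):
    """Blend two co-registered image rows across a vertical seam.

    Pixels well left of the seam come from left_row, pixels well right of it
    come from right_row, and a linear ramp of width feather_width (in pixels),
    centred on seam_col, mixes the two in between.
    """
    if len(left_row) != len(right_row):
        raise ValueError("rows must be the same length")
    blended = []
    for col, (a, b) in enumerate(zip(left_row, right_row)):
        if feather_width <= 0:
            # No feathering: hard cut at the seam.
            w_right = 1.0 if col >= seam_col else 0.0
        else:
            # Weight of the right-hand image ramps from 0 to 1 across the zone.
            start = seam_col - feather_width / 2.0
            w_right = min(1.0, max(0.0, (col - start) / feather_width))
        blended.append((1.0 - w_right) * a + w_right * b)
    return blended


left = [100.0] * 8   # slightly darker image
right = [120.0] * 8  # slightly brighter image
print(feather_row(left, right, seam_col=4, feather_width=4))
```

With a hard cut the 20 DN difference would appear as a visible step at the seam; with the ramp it is spread over several pixels.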

Another important aspect is radiometry. While some operators will tackle radiometry early on in the workflow (as previously discussed in the “Image Dodging” step), others will dodge or apply other radiometric algorithms during the orthomosaic production process. The goal is to make the output group of images radiometrically homogeneous. This will result in a visually appealing output mosaic that has consistent radiometric qualities across the group of images comprising the project area.
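One simple way to picture radiometric balancing across a group of images is a global mean and standard deviation match: compute statistics for the whole group, then shift and scale each image's DNs toward those targets. The sketch below assumes 8-bit imagery and represents each image as a flat list of DN values; it is a deliberately simplified stand-in and not the dodging algorithm of any specific product.

```python
from statistics import mean, pstdev


def balance_to_group(images, max_dn=255):
    """Shift each image's DNs toward the mean and spread of the whole group.

    images is a list of images, each a flat list of DN values (an assumption
    made to keep the example small). Output DNs are clipped to 0..max_dn.
    """
    all_pixels = [dn for img in images for dn in img]
    target_mean = mean(all_pixels)
    target_std = pstdev(all_pixels)
    balanced = []
    for img in images:
        img_mean, img_std = mean(img), pstdev(img)
        # Guard against flat images, which have no spread to rescale.
        gain = target_std / img_std if img_std > 0 else 1.0
        balanced.append([
            min(max_dn, max(0, round((dn - img_mean) * gain + target_mean)))
            for dn in img
        ])
    return balanced


dark = [50, 60, 70]
bright = [150, 160, 170]
for img in balance_to_group([dark, bright]):
    print(img, "mean:", mean(img))
```

After balancing, both images share approximately the same mean brightness, which is the "radiometrically homogeneous" result described above. Real tools add local (within-image) corrections and user constraints on top of this.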

A project area may be several hundred square kilometers in size, so a single output mosaic file is not usually an option due to the sheer size. End customers cannot usually handle a single large file and would prefer to receive their digital orthomosaic in a series of tiles defined by their specification. Most photogrammetric systems have a method of defining a tiling system that can be ingested by the orthomosaicing application to produce a seamless tiled output product.
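A tiling system can be as simple as a regular grid laid over the project extent. The following sketch generates named tile extents from a bounding box and a tile size; the naming scheme, the coordinate values, and the function itself are hypothetical and only meant to show the idea.

```python
import math


def make_tiles(min_x, min_y, max_x, max_y, tile_size):
    """Return (name, (x0, y0, x1, y1)) tuples covering the extent on a regular grid.

    Tiles on the far edges may extend past max_x / max_y so that every tile
    has the same dimensions.
    """
    if tile_size <= 0:
        raise ValueError("tile_size must be positive")
    cols = math.ceil((max_x - min_x) / tile_size)
    rows = math.ceil((max_y - min_y) / tile_size)
    tiles = []
    for r in range(rows):
        for c in range(cols):
            x0 = min_x + c * tile_size
            y0 = min_y + r * tile_size
            name = f"tile_r{r:03d}_c{c:03d}"  # hypothetical naming scheme
            tiles.append((name, (x0, y0, x0 + tile_size, y0 + tile_size)))
    return tiles


# Illustrative project extent of 12.5 km x 5 km in projected metres, 5 km tiles.
for name, extent in make_tiles(500000, 4100000, 512500, 4105000, tile_size=5000):
    print(name, extent)
```

In practice the tile definition usually comes from the customer's specification (for example, an existing map sheet index) rather than being generated this way.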

In recent years the introduction of high resolution satellite imagery and airborne pushbroom sensors such as the ADS40 has added new variations to the traditional workflow. Both types of sensors produce data that are digital from the point of capture, eliminating the need to scan film photography. Commercially available satellite imagery (e.g. CARTOSAT, ALOS, etcetera) has become available at increasingly high levels of resolution (e.g. 80cm resolution for CARTOSAT-2). While this is sufficient for many mapping projects, some engineering-level project applications still require the resolution available from airborne sensors.

Pushbroom sensors such as the ADS40 can achieve a ground sample distance in the 5-10cm range. Modern digital airborne sensors are also usually mounted with a GPS/IMU system. GPS (Global Positioning System) technology assists mapping projects by using a series of base stations in the project area and a constellation of satellites providing positional information accessed by the GPS receiver on-board an aircraft. IMUs (Inertial Measurement Units) are increasingly used to establish precise orientation angles (pitch, yaw, and roll) for the sensor platform in relation to the ground coordinate system. GPS and IMU information can be extremely beneficial for mapping areas where limited ground control information is available (e.g. rugged terrain). They also assist in the triangulation process by providing highly accurate initial orientation data, which is then further refined by the bundle adjustment procedure. GPS and IMU information can also be used for “direct georeferencing”, which bypasses the time-consuming AT process. However, direct georeferencing is not a universally accepted methodology within the mapping community. The caveat to direct georeferencing is that project accuracy may suffer; however, this may be acceptable for rapid response mapping and other types of projects where lower accuracies are adequate for the end customer.
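Whether the six orientation parameters (X, Y, Z, omega, phi, kappa) come out of a bundle adjustment or straight from GPS/IMU, they are used the same way: in the collinearity equations that relate a ground point to its position in a frame image. The sketch below assumes one common textbook convention (sequential omega, phi, kappa rotations, angles in radians, principal point at the image origin, no lens distortion). Rotation conventions differ between software packages, so treat the matrix layout here as an assumption rather than a universal definition.

```python
import math


def rotation_matrix(omega, phi, kappa):
    """Ground-to-image rotation matrix for sequential omega, phi, kappa
    rotations (radians), in one common textbook convention."""
    so, co = math.sin(omega), math.cos(omega)
    sp, cp = math.sin(phi), math.cos(phi)
    sk, ck = math.sin(kappa), math.cos(kappa)
    return [
        [cp * ck, so * sp * ck + co * sk, -co * sp * ck + so * sk],
        [-cp * sk, -so * sp * sk + co * ck, co * sp * sk + so * ck],
        [sp, -so * cp, co * cp],
    ]


def ground_to_image(ground_xyz, camera_xyz, angles, focal_length):
    """Project a ground point into image coordinates via the collinearity
    equations. The result is in the same units as focal_length."""
    m = rotation_matrix(*angles)
    # Vector from the exposure station to the ground point.
    d = [g - c for g, c in zip(ground_xyz, camera_xyz)]
    u, v, w = (sum(m[i][j] * d[j] for j in range(3)) for i in range(3))
    return (-focal_length * u / w, -focal_length * v / w)


# Illustrative values: level camera 1000 m above a ground point offset by
# (100 m, 50 m), with a 153 mm focal length.
x, y = ground_to_image((100.0, 50.0, 0.0), (0.0, 0.0, 1000.0), (0.0, 0.0, 0.0), 153.0)
print(f"x = {x:.2f} mm, y = {y:.2f} mm")
```

In direct georeferencing the angles and position fed into a function like this come directly from the GPS/IMU solution, so any error in them passes straight through to the image-to-ground relationship; AT reduces that error by adjusting the parameters against measured tie and control points.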

Thoughts on Current and Future Photogrammetric Workflows

We are beginning to see some shifts in the currents guiding photogrammetric workflows. These shifts are being driven by advances in computing hardware, new sensor technology, and enterprise solutions.

Data storage and dissemination is a dynamic area in the industry. While imagery was traditionally backed up on tape systems, the cost of storage has dramatically declined in recent years. As customer demand for high-resolution data increases, it is becoming less practical for users to store data directly on their workstations. Users are increasingly storing imagery on servers, employing different methods for accessing it. Demand appears to be on the increase for tools to manage and archive data. Organizations are also examining the possibility of sharing and publishing data. The data may be stored on servers and published via web services or made available for access, subscription, or purchase via a portal.

Sensor hardware is also rapidly changing the photogrammetric workflow. LIDAR has now been widely adopted and accepted, providing extremely high-density and high-accuracy terrain data. There is also a growing trend of integrating LIDAR with digital frame sensors, which allows optical and terrain data to be collected simultaneously and enables rapid digital orthophoto processing. This is much more cost-effective than flying a project area with multiple sensors for image and terrain data. When coupled with airborne GPS and IMU technology, terrain and georeferenced imagery (the primary ingredients for orthos) can be available shortly after the data is downloaded following a flight. IFSAR mapping systems are also a growing source of terrain data.

Coupled with the explosion of imagery is the need to process it efficiently. One method that researchers and software vendors have begun exploring is distributed processing. Under this model a processing job is divided up into portions which are then submitted to remote “processing nodes”, which results in a significant improvement in overall throughput for large projects. Most commercial efforts, such as the ERDAS Ortho Accelerator, have focused on ortho processing. However, there are also several other photogrammetric tasks that lend themselves to distributed processing solutions (e.g. terrain correlation, point matching, etc.).
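The divide-and-submit pattern behind distributed processing can be sketched in a few lines. In the example below a local thread pool stands in for remote processing nodes, and `process_portion` is a placeholder for the real work (for instance, orthorectifying each image in the portion). All names are hypothetical, and this is not how the ERDAS Ortho Accelerator or any other product is implemented.

```python
from concurrent.futures import ThreadPoolExecutor


def split_job(items, n_nodes):
    """Divide a list of work items into n_nodes roughly equal portions."""
    return [items[i::n_nodes] for i in range(n_nodes)]


def process_portion(portion):
    """Placeholder for the work one node would do on its portion.

    Here it only derives an output name for each input image.
    """
    return [f"{name}_ortho" for name in portion]


def run_distributed(items, n_nodes=4):
    """Split the job, hand each portion to a worker, and gather the results."""
    portions = split_job(items, n_nodes)
    with ThreadPoolExecutor(max_workers=n_nodes) as pool:
        # A local thread pool is used here as a stand-in for remote nodes.
        results = list(pool.map(process_portion, portions))
    return sorted(out for part in results for out in part)


images = [f"img_{i:03d}" for i in range(10)]
print(run_distributed(images, n_nodes=3))
```

Orthorectification suits this pattern well because each image (or output tile) can be processed independently; tasks with more dependencies between images, such as the bundle adjustment itself, are harder to split this cleanly.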

With data increasingly stored on network locations and the general adoption of database management systems, enterprise photogrammetric solutions will likely change the face of the classical photogrammetric workflow. With imagery and other geospatial data increasingly stored on servers, the processing framework is likely to change such that the operator interacts with a client application that kicks off photogrammetric and geospatial processing operations. Rather than running a heavy “digital photogrammetry workstation”, or DPW, the operator will be operating a client view into the project. Also, geospatial servers will enable organizations to store and reuse project and other data. For example, automatic correlation processes could automatically identify and utilize seed data stored in online databases, or terrain data stored from previous jobs. The notion of collecting data once and using it many times will be prevalent. Large quantities of data such as airborne and terrestrial LIDAR-derived point clouds can be stored on the server and have operations such as filtering, classification and 3D feature extraction applied to them. With a shift to enterprise solutions, industry adoption of open standards (e.g. those of the Open Geospatial Consortium) will be critical. Providing open and extensible systems will allow organizations to customize workflows to meet their specific needs, fully enabling their investment in enterprise technology.

Conclusions

This is an exciting time for those of us in the photogrammetry group at ERDAS. Recent trends discussed above have opened up new avenues for changing, modernizing, and empowering what was until recently a relatively static workflow. Our customers drive us to deliver solutions that meet a variety of needs. While there is the constant need to pay attention to existing workflows, it is important to keep an eye on technology trends that will guide future workflow directions. Enterprise integration will likely change the face of classical photogrammetric workflows, making photogrammetry a ubiquitous component of modern geospatial business decision systems.

The 2009 program contractors include a group of commercial mapping firms that are all well-known in the North American mapping industry: 3001, Aerial Services, the North West Group, Photo Science, Sanborn and Surdex. It is interesting to note that the cameras used will be a mix of large-format frame and pushbroom sensors. 3001 has both a Leica Geosystems ADS40 (pushbroom) and an Intergraph DMC (frame), and I'm not sure which will be used for NAIP acquisition. Aerial Services and the North West Group operate Leica ADS sensors. Photo Science and Surdex operate DMCs while Sanborn operates a Microsoft UltraCam (frame). The photogrammetric processing workflows for frame and pushbroom sensors are quite different, with pushbroom sensors capturing long strips of imagery in a "pixel carpet" versus the traditional frame approach. However, it is good to see a mix of technology in use.
